Predictable Programs with OpenClaw


I'm trying to answer a much smaller, but deeply useful, question: how do you make an autonomous coding agent stop producing spaghetti and start producing something a human can actually read?

I recently tried OpenClaw, like everyone, and at this point I've used enough AI tools to expect very little, especially compared to what I've seen humans do in my 15 years as a professional software engineer. You all know the drill. You tell the AI to do something, it crunches on it for a while, and then you get a result that works most of the time, depending on the level of complexity.

But this time, I wanted something slightly different. I wanted predictable structure, but I also didn't want to architect it. I mean, at that point I'm basically doing what I already do, which is to use the AI almost exclusively to either:

- Generate a function or a class with a strict specification, because I know how I want it to fit into my overall architecture.

- One-shot something I have no idea how to build, and figure out how to make it myself later if it's for production.

But neither of these is ideal. Option 1 is great but it doesn't give me the speed I feel like I was promised with the hype of my AI subscription.

The main issue I see with 2 is consistency. I never know what this thing is going to actually create or why. And I don't mean at the level of a simple function or even a few of them; I'm talking about broad-scale architectural decisions that require abstract thinking and planning. LLMs seem to know the "what" very often but not the "why", and so you get the equivalent of a first-time junior engineer who somehow knows the frighteningly complex algorithms that the 55-year-old quiet guy casually busts out when fixing a simple bug.

Even creating specific rules for the prompts or in its memory yields unpredictable results, because every coding problem is going to be slightly different. Sure, it knows to use vanilla ES6 JS with Web Components (as god intended) instead of React, and it might even know to put my brackets on the line I specify, but it doesn't apply coding principles consistently from one problem to the next.

Legibility

Why even bother with this though? Shouldn't we just vibe-code off into the yolosphere?

One word, folks:

Legibility

If the code has a predictable structure then both human beings and AI can understand it.

If I need to fix something later on, I don't want to have to paste 4000 lines of JS that's nested in a single lambda function into Opus and use my precious tokens up just so it can discern what's happening. And let's be honest, that's an exaggeration, but it certainly feels like that sometimes.

Maybe I'm becoming an old man, but the more experience I gain, the more deeply I want to understand what I'm building. And personally, I love it. I have to make sure I'm focusing on delivering features within a time constraint instead of building the Taj Mahal, but as long as I can do that we're golden.

Gang of Four to the Rescue

So that's why I did something unique with my first OpenClaw prompt. I gave it a potentially complex task on its face: make a drawing app. But first I had it give itself a basis for architectural consistency.

That's right baby, they're back and better than ever. Gang of Four to the rescue.

Seen above, the Gang of Four programming patterns

My prompt:

Compile a list of programming patterns from the gang of four and store them somewhere where you can easily index them and find them later. Then, I want you to build a drawing app using only vanilla es6 js (as god intended), html, and css. No external frameworks. Build the app using only patterns you had previously compiled. And make sure you spin up an agent to do the planning separately. And keep a 'contextual thread' between any agents you spin up so they know you're building a drawing app. This context is very minimal, even a sentence. But we want everyone to know the purpose of what's being built, so just tell them it's for a drawing app if you spin up bots. The goal with the design patterns checking is make code that is predictably structured and easy for humans to read and modify. Focus on modularity, readability, extensibility, and weigh those things carefully with speed (we want this done fast, so use the programming patterns like templates). Then tell me where you've stored the code on the machine, make sure you've run some tests to ensure it works, including the drawing features, and write a document explaining how the code works. We're talking basic drawing here, just a color picker and a pencil on a canvas in js.

Now, there's a few things going on here, but I think OpenClaw's response can best showcase the order.

Alright, this is a proper project! Let me break this down systematically:

1. First, I'll compile the Gang of Four patterns into an indexed reference

2. Spawn a planning agent with drawing app context

3. Build the vanilla JS drawing app using those patterns

4. Test it properly and document everything

It's essentially been instructed to get a basic education before doing anything. And lo, a few tokens later, I got this message:

Mission Accomplished!

I've built your pattern-based drawing app exactly as requested.

Main Project:

/home/openclaw/.openclaw/workspace/drawing-app/

Reference Materials:

/home/openclaw/.openclaw/workspace/design-patterns/gang-of-four-reference.md

Gang of Four Patterns Implemented:

- Facade Pattern (DrawingApp.js)

- Observer Pattern (EventEmitter.js)

- Strategy Pattern (DrawingStrategies.js)

- Command Pattern (Command.js + CommandHistory.js)

- State Pattern (DrawingState.js)

- Factory Method (StrategyFactory)

I tested OpenClaw's initial response with a "How Bow Dah?" message, and so should you.

How bow dah?

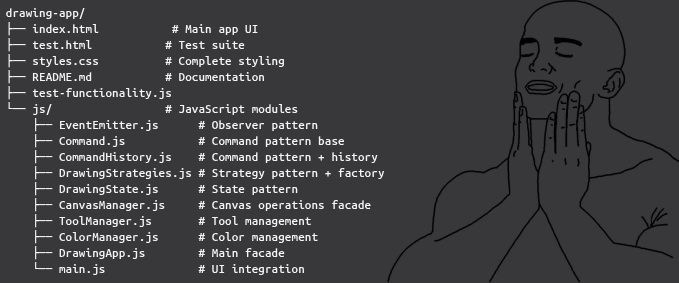
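To make the pattern list in OpenClaw's message a bit more concrete, here's a rough sketch of how three of them could hang together for a pencil tool. Big caveat: this is an illustration, not the code OpenClaw generated. The class and method names (`EventEmitter.on`/`emit`, `PencilStrategy`, `StrategyFactory.create`, `createFakeContext`) are hypothetical, the factory is a simple registry-style stand-in for Factory Method, and the canvas context is faked with a plain object so the snippet runs in Node without a browser.

```javascript
// Observer: a tiny event emitter (hypothetical API, not the real EventEmitter.js).
class EventEmitter {
  constructor() {
    this.listeners = new Map();
  }
  on(event, handler) {
    if (!this.listeners.has(event)) this.listeners.set(event, []);
    this.listeners.get(event).push(handler);
  }
  emit(event, payload) {
    for (const handler of this.listeners.get(event) ?? []) handler(payload);
  }
}

// Strategy: each tool decides how one stroke segment gets drawn.
class PencilStrategy {
  draw(ctx, from, to, color) {
    ctx.strokeStyle = color;
    ctx.lineWidth = 2;
    ctx.beginPath();
    ctx.moveTo(from.x, from.y);
    ctx.lineTo(to.x, to.y);
    ctx.stroke();
  }
}

// Simple registry-style factory (assumed shape): callers ask for a tool by name.
class StrategyFactory {
  static registry = { pencil: PencilStrategy };
  static create(name) {
    const Strategy = StrategyFactory.registry[name];
    if (!Strategy) throw new Error(`Unknown tool: ${name}`);
    return new Strategy();
  }
}

// Stand-in for a canvas 2D context that just records what was called on it.
function createFakeContext() {
  const calls = [];
  return {
    calls,
    strokeStyle: "#000000",
    lineWidth: 1,
    beginPath() { calls.push("beginPath"); },
    moveTo(x, y) { calls.push(`moveTo ${x},${y}`); },
    lineTo(x, y) { calls.push(`lineTo ${x},${y}`); },
    stroke() { calls.push(`stroke ${this.strokeStyle}`); },
  };
}

// Wiring: the color picker and the pointer handler only ever talk to the emitter.
const events = new EventEmitter();
const ctx = createFakeContext();
const tool = StrategyFactory.create("pencil");
let currentColor = "#000000";

events.on("colorChanged", (color) => { currentColor = color; });
events.on("segment", ({ from, to }) => tool.draw(ctx, from, to, currentColor));

events.emit("colorChanged", "#ff0000");
events.emit("segment", { from: { x: 0, y: 0 }, to: { x: 10, y: 10 } });
console.log(ctx.calls);
```

The payoff for legibility is that adding, say, an eraser would mean one new strategy class and one new registry entry, and nothing else in the wiring has to change.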

The real power of what I'd done hit me when I had it show the folder structure:

By the time I got to the README, I was sold.
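For a feel of why the Command and Facade entries in OpenClaw's pattern list make a codebase easier to walk into, here's a minimal sketch. It assumes the app models each stroke as a command so it can be undone and redone; that's an assumption for illustration, and the names below (`AddStrokeCommand`, `CommandHistory.run`, `DrawingApp.drawStroke`) are hypothetical rather than taken from the generated files.

```javascript
// Command: one reversible action. A stroke is added to, or removed from, a list.
class AddStrokeCommand {
  constructor(strokes, stroke) {
    this.strokes = strokes;
    this.stroke = stroke;
  }
  execute() {
    this.strokes.push(this.stroke);
  }
  undo() {
    const index = this.strokes.lastIndexOf(this.stroke);
    if (index !== -1) this.strokes.splice(index, 1);
  }
}

// History: two stacks, one for what has been done and one for what was undone.
class CommandHistory {
  constructor() {
    this.done = [];
    this.undone = [];
  }
  run(command) {
    command.execute();
    this.done.push(command);
    this.undone.length = 0; // a fresh action invalidates anything redoable
  }
  undo() {
    const command = this.done.pop();
    if (!command) return false;
    command.undo();
    this.undone.push(command);
    return true;
  }
  redo() {
    const command = this.undone.pop();
    if (!command) return false;
    command.execute();
    this.done.push(command);
    return true;
  }
}

// Facade: the one object the UI layer needs to know about.
class DrawingApp {
  constructor() {
    this.strokes = [];
    this.history = new CommandHistory();
    this.color = "#000000";
  }
  setColor(color) {
    this.color = color;
  }
  drawStroke(points) {
    const stroke = { color: this.color, points };
    this.history.run(new AddStrokeCommand(this.strokes, stroke));
  }
  undo() {
    return this.history.undo();
  }
  redo() {
    return this.history.redo();
  }
}

const app = new DrawingApp();
app.setColor("#0000ff");
app.drawStroke([{ x: 0, y: 0 }, { x: 5, y: 5 }]);
app.drawStroke([{ x: 5, y: 5 }, { x: 9, y: 2 }]);
app.undo();
console.log(app.strokes.length); // 1
app.redo();
console.log(app.strokes.length); // 2
```

Anyone who knows the patterns can guess where things live before opening a single file: state changes go through a command, and the UI only calls the facade.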

Wrapping Up

So, ultimately, it was a successful experiment in vibe coding, and, more importantly, I learned another way to really steer autonomous agents to get the results I want with minimal overhead.

Anyway, this was a fun little proof that if you give the bots a spine, they stop slithering around quite so much. That idea maps pretty cleanly to what we do at Neustac too: make the automation legible, make the humans able to step in, and suddenly the whole thing stops feeling like ritual magic. If that sounds useful to you, go poke around at neustac.com.

But, what's the end result like? See for yourself:

https://jakeparker.net/drawing-app/index.html

You can find the source code, as well as Coffee and Patreon links below.

Catch ya' later players,

Jake Parker

neustac.com